The human voice carries far more than words. Beneath every sentence lies a rich layer of emotional data (tone, pitch, rhythm, intensity, and subtle vocal tremors) that can reveal how a person feels. Speech emotion AI platforms like Beyond Verbal have emerged to decode these signals, turning ordinary voice recordings into emotional insights. From healthcare and customer service to automotive systems and market research, voice emotion analysis is rapidly becoming one of the most compelling frontiers in artificial intelligence.

TLDR: Speech emotion AI platforms analyze vocal patterns to detect emotions such as happiness, stress, anger, or confidence without relying on the meaning of words. Technologies like Beyond Verbal use advanced signal processing and machine learning to extract emotional cues from tone, pitch, and rhythm. These tools are transforming industries including healthcare, customer experience, finance, and automotive technology. However, ethical considerations around privacy and consent remain critical as the technology evolves.

Unlike traditional speech recognition systems that focus on what is being said, emotion AI prioritizes how it is being said. This distinction is powerful. Words can be carefully chosen or even deceptive, but vocal patterns are far more difficult to consciously manipulate. That is why emotional analytics derived from voice signals can provide deeper and often more authentic insights than text-based sentiment analysis alone.

How Speech Emotion AI Works

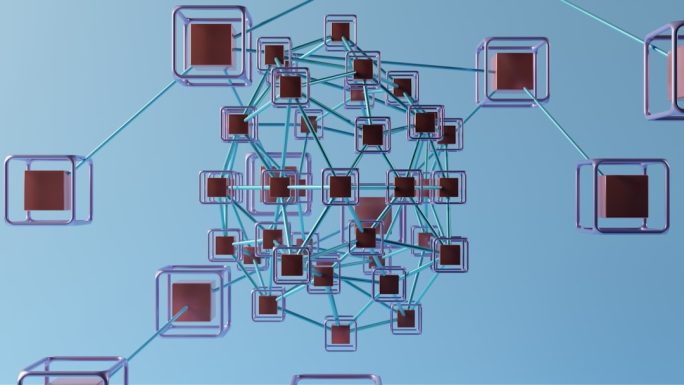

At its core, speech emotion AI operates through a combination of signal processing and machine learning. Voice recordings are broken down into tiny acoustic segments, and algorithms analyze multiple parameters simultaneously.

- Pitch: Variations in frequency that can indicate excitement, anxiety, or calmness.

- Intensity: Loudness levels associated with confidence, anger, or enthusiasm.

- Speech Rate: Faster or slower patterns that may signal stress or thoughtfulness.

- Voice Quality: Subtle changes such as breathiness or tension.

- Rhythmic Patterns: Pauses, hesitations, and emphasis.

These features are converted into quantitative markers. Machine learning models, ideally trained on large, culturally diverse datasets, identify patterns that correlate with specific emotional states. The result is an emotional “fingerprint” that evolves as the conversation unfolds.
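To make the idea of "quantitative markers" concrete, here is a minimal sketch of frame-level feature extraction in plain Python. It is an illustration under stated assumptions, not Beyond Verbal's method: the function names, the 75 to 400 Hz pitch search range, and the autocorrelation pitch estimator are all choices made for this example.

```python
import math

def frame_signal(samples, frame_len, hop):
    """Split a list of audio samples into overlapping fixed-length frames."""
    return [samples[i:i + frame_len]
            for i in range(0, len(samples) - frame_len + 1, hop)]

def rms(frame):
    """Root-mean-square energy, a rough proxy for intensity (loudness)."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def estimate_pitch(frame, sample_rate, fmin=75.0, fmax=400.0):
    """Estimate pitch in Hz from the strongest autocorrelation peak.

    Only lags corresponding to fmin..fmax are searched (an assumed range
    for adult speech). Returns 0.0 if no positive peak is found.
    """
    min_lag = int(sample_rate / fmax)
    max_lag = min(int(sample_rate / fmin), len(frame) - 1)
    best_lag, best_score = 0, 0.0
    for lag in range(min_lag, max_lag + 1):
        score = sum(frame[i] * frame[i + lag]
                    for i in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag if best_lag else 0.0

def extract_features(samples, sample_rate, frame_len, hop):
    """Return one dict of markers (intensity and pitch) per frame."""
    return [{"rms": rms(f), "pitch_hz": estimate_pitch(f, sample_rate)}
            for f in frame_signal(samples, frame_len, hop)]
```

A real system would add many more features (speech rate, voice quality, pause statistics) and feed them to a trained model; this sketch stops at the measurement step.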

Unlike simple sentiment analysis that categorizes speech as positive or negative, advanced platforms detect nuanced emotional dimensions such as:

- Arousal (calm vs. excited)

- Valence (positive vs. negative emotional state)

- Mood stability

- Engagement levels

- Cognitive load

This multidimensional approach allows organizations to move beyond surface-level understanding and uncover deeper psychological insights.

Beyond Verbal and the Rise of Vocal Biomarkers

Beyond Verbal is often cited as a pioneer in this space because it focuses exclusively on the acoustic properties of speech rather than language content. Its technology extracts what are known as vocal biomarkers—measurable vocal traits linked to emotional and physiological conditions.

Vocal biomarkers operate similarly to physical biomarkers like heart rate or blood pressure. Subtle changes in voice production can reflect stress, fatigue, depression, or even early signs of neurological conditions. By analyzing these patterns over time, AI platforms can detect anomalies or emotional shifts that may not be visible through text analysis or self-reporting.

This approach has significant implications for:

- Mental health monitoring

- Remote patient assessments

- Early detection of cognitive decline

- Insurance risk analysis

Importantly, these systems do not necessarily need to understand the spoken language. This language-agnostic capability makes them adaptable across global markets.

Applications Across Industries

Speech emotion AI is no longer confined to research labs. It has moved decisively into mainstream enterprise applications.

1. Customer Experience and Call Centers

Call centers were among the earliest adopters of vocal emotion analytics. By integrating AI into live calls, companies can:

- Detect customer frustration in real time

- Alert supervisors before escalation occurs

- Provide agents with emotional guidance prompts

- Improve training based on emotional performance metrics

For example, if a customer’s stress level spikes during a billing discussion, AI systems can recommend calming language strategies to the representative.
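The real-time alerting described above can be sketched as a rolling average over per-utterance stress scores. This is a simplified illustration: the scores are assumed to come from some upstream emotion model and to lie between 0 and 1, and the function name, window size, and threshold are hypothetical values chosen for the example.

```python
from collections import deque

def frustration_alerts(scores, window=3, threshold=0.7):
    """Return the indices at which the rolling mean of the last
    `window` scores reaches `threshold`.

    Averaging over a window avoids alerting on a single noisy reading.
    """
    recent = deque(maxlen=window)
    alerts = []
    for i, score in enumerate(scores):
        recent.append(score)
        if len(recent) == window and sum(recent) / window >= threshold:
            alerts.append(i)
    return alerts
```

In a live deployment, each alert index would correspond to a moment in the call where a supervisor notification or an agent prompt could be triggered.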

2. Healthcare and Telemedicine

In healthcare, voice emotion AI is particularly promising. Depression, anxiety, Parkinson’s disease, and Alzheimer’s disease can all affect vocal characteristics. Continuous voice monitoring through apps or telehealth sessions may offer early warning signs.

Because voice data can be captured passively during natural conversation, it allows for non-invasive longitudinal monitoring. This is especially valuable in remote or underserved areas where frequent clinical visits are impractical.

3. Automotive and Smart Assistants

Emotion-aware AI is also transforming vehicles and virtual assistants. Imagine a car that detects driver fatigue or agitation through vocal cues and responds by adjusting music, activating safety alerts, or suggesting a break.

Similarly, virtual assistants equipped with emotional intelligence can respond more empathetically. Instead of giving neutral responses, they can adapt tone and pacing to better match the user’s emotional state.

4. Financial Services

Banks and financial institutions use speech emotion AI to assess risk and authenticity. Stress detection during loan interviews or insurance claims can help identify inconsistencies. While not definitive proof of deception, emotional irregularities may prompt further review.

5. Market Research and Media Testing

Advertisers are increasingly measuring audience reactions through voice analysis. By tracking emotional engagement during focus groups or interviews, brands can fine-tune messaging for maximum resonance.

The Science Behind Emotional Detection

The human voice is produced through a complex interaction of the lungs, vocal cords, and resonating chambers in the throat and mouth. Emotions influence muscle tension, breathing patterns, and neural signals, all of which subtly alter vocal output.

When someone is stressed, for example:

- Breathing may become shallow or rapid.

- Vocal cords may tighten, raising pitch.

- Speech rate may accelerate.

- Micro-tremors may increase.

AI systems measure these micro-variations at a scale undetectable to the human ear. Over time, they establish baseline emotional profiles and identify deviations that indicate mood shifts or heightened cognitive strain.
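One simple way to express "deviation from a baseline" is a z-score: how many standard deviations a new measurement sits from a speaker's own history. The sketch below assumes the baseline is a list of earlier readings of a single feature (for example, average pitch in Hz per session); the function name and the choice of z-scores are illustrative, not a description of any particular product.

```python
import statistics

def deviation_from_baseline(baseline, value):
    """Return the z-score of `value` relative to the speaker's baseline.

    `baseline` needs at least two readings. A flat baseline (zero
    spread) yields 0.0 rather than a division error.
    """
    mean = statistics.mean(baseline)
    spread = statistics.stdev(baseline)
    if spread == 0:
        return 0.0
    return (value - mean) / spread
```

A large absolute z-score only flags that something changed. It does not say why, which is one reason such readings are best interpreted alongside context.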

Ethical Considerations and Privacy Challenges

Despite its promise, speech emotion AI raises serious ethical questions. Voice is deeply personal. Emotional profiling without informed consent risks undermining trust and autonomy.

Key ethical concerns include:

- Consent: Are individuals aware their emotional data is being analyzed?

- Data Security: How securely is sensitive voice data stored?

- Bias: Are training datasets culturally diverse enough to avoid misinterpretation?

- Overreach: Could emotional data be misused in hiring or law enforcement?

Accents, cultural speech patterns, and neurodiversity can affect vocal expression. Poorly designed systems may misclassify emotions, leading to unfair conclusions. Responsible deployment requires transparency, explainability, and regulatory oversight.
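One practical check for the bias concern is to compare a model's accuracy across speaker groups on labeled test data. The sketch below is a hypothetical audit helper: the record format (group, predicted emotion, actual emotion) and the function name are assumptions made for illustration.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Compute per-group accuracy from (group, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}
```

A marked gap between groups suggests the model misreads some speakers more often than others and should be investigated before deployment.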

Accuracy and Limitations

While advancements are impressive, speech emotion AI is not infallible. Emotions are complex and often blended. A single vocal characteristic rarely defines a specific feeling; instead, AI must interpret clusters of features.

Additional limitations include:

- Background noise interference

- Poor recording quality

- Cultural variation in emotional expression

- Intentional voice modulation

Therefore, experts caution against using vocal emotion analysis as a standalone diagnostic or decision-making tool. It is most effective when combined with contextual data and human oversight.

The Future of Emotionally Intelligent AI

The next generation of speech emotion AI platforms is likely to integrate multimodal data—combining voice, facial expression, physiological signals, and contextual information. This layered approach will enhance reliability and enable richer emotional mapping.

We may soon see:

- Real-time emotional dashboards in corporate environments

- Emotion-adaptive educational platforms tailoring lessons to student engagement

- Continuous mental health monitoring through wearable-connected apps

- Hyper-personalized digital assistants capable of empathetic dialogue

As computational power increases and datasets expand, models will become more culturally inclusive and context-aware. However, technological sophistication must be matched with thoughtful governance.

Conclusion

Speech emotion AI platforms like Beyond Verbal represent a profound shift in how technology understands human communication. By decoding the hidden emotional signals in voice, these systems unlock insights that were once accessible only through intuition or psychological expertise. Their applications span customer service, healthcare, automotive innovation, financial risk assessment, and beyond.

At the same time, the very power of emotional analytics demands careful handling. Voice is not merely sound—it is identity, mood, vulnerability, and intent intertwined. As organizations increasingly adopt speech emotion AI, balancing innovation with ethics will determine whether this technology enhances human connection or compromises it.

Ultimately, the promise of speech emotion AI lies not in replacing human empathy, but in augmenting it. When used responsibly, these platforms can help institutions listen more carefully, respond more thoughtfully, and better understand the emotional dimensions that shape every conversation.
